VSS 2026 begins tomorrow! We are pleased to announce the invited speakers at this year's workshop.

Dr. Judy Fan is an Assistant Professor of Psychology at Stanford University. Her CogTools Lab aims to reverse engineer the human cognitive toolkit, in particular how people use physical representations of thought to learn, communicate, and solve problems. The lab combines approaches from cognitive science, computational neuroscience, and artificial intelligence to achieve a deeper understanding of quintessentially human ways of thinking and imagining. Dr. Fan's work spans a wide range of topics and often bridges cognitive science, vision science, and visualization research.

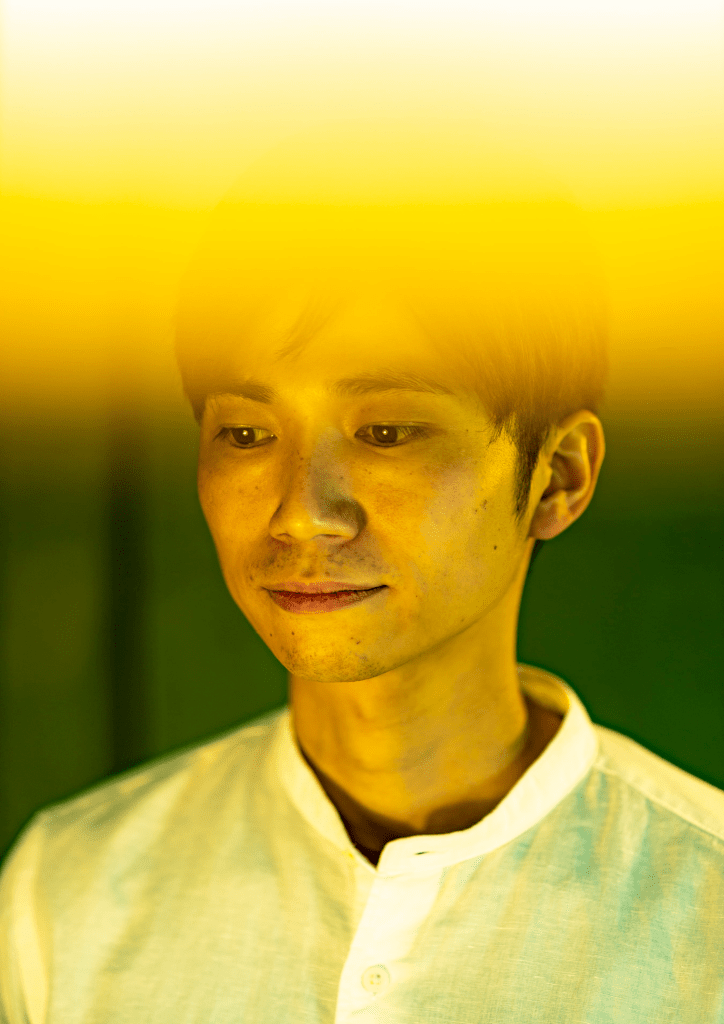

Michael A. Cohen is an Assistant Professor in the Department of Psychology and the Program in Neuroscience at Amherst College. His research uses fMRI, EEG, psychophysics, and computational modeling to understand the limits of perception, memory, and cognition. He asks questions such as "What is the capacity of visual cognition?" and "What is the bandwidth of perceptual experience?"